Understanding the Science of Quality Data Management for Predictable Outcomes

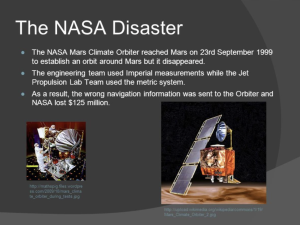

A sobering example of the consequences of the Garbage In, Garbage Out (GIGO) principle is the case of the Mars Climate Orbiter.

Mars Climate Orbiter.

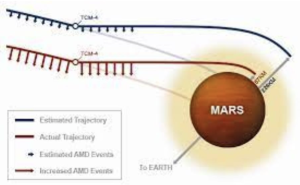

Launched by NASA on December 11, 1998, the Mars Climate Orbiter was the first robotic probe dedicated to studying the climate of a planet other than Earth. The journey to Mars, though it required occasional course corrections, was largely uneventful. On September 8, 1999, a trajectory correction maneuver commanded from Earth was carried out to place the probe on the optimal path for orbital insertion. Yet on September 23, 1999, the unthinkable happened: as the probe attempted to enter orbit, NASA lost contact with it. Investigators later concluded that it had flown far too low and had most likely been destroyed in the Martian atmosphere.

What went wrong for NASA?

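Ground software supplied thruster impulse data in English units (pound-force seconds), while the navigation software expected metric units (newton-seconds). Because one pound-force second is roughly 4.45 newton-seconds, the trajectory calculations were wrong, and the probe was brought too close to Mars, resulting in its destruction.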

This tragic mishap is an unmistakable example of the GIGO principle in action. Bad input led to catastrophic output. The Orbiter’s disintegration illustrates how the GIGO metaphor applies to two distinct categories of input issues: type and quality.

Problems of Type

Problems of ‘type’ arise when an incorrect type of input is given. For instance, entering a phone number into a field meant for a credit card number, or in the case of the Mars Climate Orbiter, entering data in English units when metric units were expected.
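To make the “type” problem concrete, here is a minimal Python sketch in which a value carries its unit with it, so a mismatch is rejected instead of silently skewing a calculation. The `Impulse` type and the `accept_metric_impulse` function are hypothetical illustrations, not NASA’s actual software; the only fixed fact used is the conversion 1 lbf·s = 4.4482216152605 N·s.

```python
from dataclasses import dataclass

# 1 pound-force second (lbf*s) = 4.4482216152605 newton-seconds (N*s).
LBF_S_TO_N_S = 4.4482216152605


@dataclass(frozen=True)
class Impulse:
    """A thruster impulse that carries its unit alongside its value (hypothetical type)."""
    value: float
    unit: str  # either "N*s" or "lbf*s" in this sketch

    def to_newton_seconds(self) -> "Impulse":
        """Return an equivalent Impulse expressed in metric units."""
        if self.unit == "N*s":
            return self
        if self.unit == "lbf*s":
            return Impulse(self.value * LBF_S_TO_N_S, "N*s")
        raise ValueError(f"unknown unit: {self.unit!r}")


def accept_metric_impulse(impulse: Impulse) -> float:
    """Hypothetical navigation input that refuses anything but N*s."""
    if impulse.unit != "N*s":
        raise TypeError(f"expected impulse in N*s, got {impulse.unit}")
    return impulse.value


# Usage: the mismatch is caught at the boundary rather than passed along.
reading = Impulse(10.0, "lbf*s")
try:
    accept_metric_impulse(reading)
except TypeError as err:
    print("rejected:", err)
print(accept_metric_impulse(reading.to_newton_seconds()))
```

A bare `float` cannot tell the receiving code which unit it is in; attaching the unit to the value is what turns a silent type problem into a loud, early error.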

Problems of Quality

Problems of ‘quality’, on the other hand, occur when the input provided is correct in type, but flawed. A case in point would be entering an incorrect phone number into a phone number field, or a pilot making a maneuver that exceeds the plane’s design tolerance.
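The distinction between the two categories can be sketched in a few lines of Python: a type check asks “is this a number at all?”, while a quality check asks “is this number plausible?”. The load-factor limits below are hypothetical values chosen for illustration, not the tolerance of any particular aircraft.

```python
# Hypothetical airframe limits for load factor, in g (illustrative values only).
MIN_LOAD_FACTOR = -1.5
MAX_LOAD_FACTOR = 3.8


def classify_input(value: object) -> str:
    """Separate 'type' problems from 'quality' problems for a load-factor input."""
    # Type check: is the input a real number at all? (bool is excluded on purpose)
    if isinstance(value, bool) or not isinstance(value, (int, float)):
        return "type problem"
    # Quality check: is the number within the assumed design tolerance?
    if not MIN_LOAD_FACTOR <= value <= MAX_LOAD_FACTOR:
        return "quality problem"
    return "ok"


print(classify_input("2.5"))  # a string where a number was expected
print(classify_input(4.2))    # right type, but beyond the assumed limit
print(classify_input(2.5))    # passes both checks
```

Note what the sketch cannot catch: a plausible but wrong value (2.5 when 2.0 was intended) passes both checks, just as a well-formed but incorrect phone number would. That gap is why the later lines of defense matter.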

Four Lines of Defense

To address the GIGO problem, well-structured systems employ four main strategies.

Firstly, they aim to prevent bad input. Ideally, a system should check input data before accepting it. A web form, for example, should enforce strong validation on every field, ensuring that each field receives the correct type of data, in the correct format, before the form can be submitted.
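As a minimal sketch of this kind of field-level validation, the Python below checks a card-number field with the Luhn checksum (a real, widely used check-digit scheme for payment card numbers) and a phone field with a deliberately loose pattern. The field names, the 13 to 19 digit length rule, and the phone regex are assumptions for illustration, not a complete set of rules for any real payment form.

```python
import re


def luhn_valid(number: str) -> bool:
    """Return True if the digit string passes the Luhn checksum."""
    if not number.isdigit():
        return False
    total = 0
    # Walk the digits right to left, doubling every second one.
    for i, ch in enumerate(reversed(number)):
        d = int(ch)
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


# Assumed, permissive phone format: optional "+", digits, spaces, or hyphens.
PHONE_PATTERN = re.compile(r"\+?[0-9][0-9 \-]{6,18}[0-9]")


def validate_form(fields: dict[str, str]) -> list[str]:
    """Return a list of validation errors; an empty list means the form may be submitted."""
    errors = []
    card = fields.get("card_number", "").replace(" ", "")
    if not (13 <= len(card) <= 19 and luhn_valid(card)):
        errors.append("card_number: not a valid card number")
    phone = fields.get("phone", "").strip()
    if not PHONE_PATTERN.fullmatch(phone):
        errors.append("phone: not a valid phone number")
    return errors


print(validate_form({"card_number": "4111 1111 1111 1111", "phone": "+1 555-123-4567"}))
print(validate_form({"card_number": "555-123-4567", "phone": "4111"}))
```

A phone number typed into the card field fails both the length rule and the checksum, which is exactly the kind of type problem described earlier.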

Secondly, if incorrect input still bypasses the initial verification, a well-designed system should be able to detect and correct it before processing the data. For instance, many systems have automated routines that periodically cleanse data, check for duplicates, verify addresses, and ensure proper data formats.
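A periodic cleansing routine of the kind described above might look like the following Python sketch. It normalizes whitespace and email case, converts dates to ISO 8601, and removes duplicates that share an email address. The record fields and the accepted date formats (including the day-first assumption for `dd/mm/yyyy`) are hypothetical; address verification usually relies on an external service and is omitted here.

```python
from datetime import datetime

# Assumed input date formats; "%d/%m/%Y" treats 10/12/2023 as 10 December.
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y")


def normalize_record(rec: dict) -> dict:
    """Return a cleaned copy of one record (hypothetical schema)."""
    out = dict(rec)
    out["email"] = rec["email"].strip().lower()
    out["name"] = " ".join(rec["name"].split())  # collapse stray whitespace
    raw = rec["signup_date"].strip()
    for fmt in DATE_FORMATS:
        try:
            out["signup_date"] = datetime.strptime(raw, fmt).date().isoformat()
            break
        except ValueError:
            continue
    else:
        out["signup_date"] = None  # unparseable: flag for manual review
    return out


def cleanse(records: list[dict]) -> list[dict]:
    """Normalize every record, then keep only the first record per email."""
    seen = set()
    cleaned = []
    for rec in map(normalize_record, records):
        if rec["email"] in seen:
            continue
        seen.add(rec["email"])
        cleaned.append(rec)
    return cleaned
```

Normalizing before de-duplicating matters: `"ADA@example.com "` and `"ada@example.com"` only compare equal once both have been cleaned.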

The third line of defense is to prevent bad output. Even when poor-quality input manages to get past the first two barriers, a good system should be capable of detecting and preventing erroneous output before it is produced. Previews are an excellent tool in this regard, as they allow users to verify output and abort the operation if necessary.
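The preview step can be reduced to a small pattern: build a draft, show it, and commit only on approval. The `run_with_preview` helper and its three callbacks are hypothetical names for this sketch; in an interactive tool, `confirm` might wrap `input()`, while in a web app it would be a confirmation screen.

```python
from typing import Callable


def run_with_preview(build_output: Callable[[], str],
                     confirm: Callable[[str], bool],
                     commit: Callable[[str], None]) -> bool:
    """Build the output, let the user inspect it, and commit only if approved."""
    draft = build_output()
    if not confirm(draft):
        return False  # user aborted: nothing is produced
    commit(draft)
    return True


# Usage in a terminal (hypothetical invoice text):
# run_with_preview(
#     lambda: "Invoice: $120.00",
#     lambda text: input(f"{text}\nProceed? [y/N] ").strip().lower() == "y",
#     print,
# )
```

Keeping `commit` separate from `build_output` is the point of the design: producing a draft has no side effects, so aborting costs nothing.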

Finally, in cases where poor-quality input manages to bypass the first three barriers and generate bad output, the system must then detect and correct this output post-production.

A user-friendly system makes this correction as effortless as possible. For example, including a prepaid return shipping label with online orders makes returns easy, so that a wrong or faulty order can be put right quickly.
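In software, one common way to correct output that has already gone out (an invoice, a payment) is to append compensating records rather than silently editing the original, so both the error and its fix remain auditable. The `Ledger` class below is a hypothetical sketch of that idea, using integer cents to avoid floating-point rounding.

```python
from dataclasses import dataclass, field


@dataclass
class Ledger:
    """Hypothetical append-only ledger; amounts are in integer cents."""
    entries: list[tuple[str, int]] = field(default_factory=list)

    def post(self, ref: str, amount_cents: int) -> None:
        self.entries.append((ref, amount_cents))

    def net(self, ref: str) -> int:
        """Net amount for an entry, including any earlier corrections to it."""
        return sum(a for r, a in self.entries if r == ref or r.startswith(ref + ":"))

    def correct(self, ref: str, right_amount_cents: int) -> None:
        """Fix an issued entry by appending a reversal and a replacement."""
        self.entries.append((f"{ref}:reversal", -self.net(ref)))
        self.entries.append((f"{ref}:corrected", right_amount_cents))

    def balance(self) -> int:
        return sum(a for _, a in self.entries)


ledger = Ledger()
ledger.post("INV-1", 12_000)     # invoice issued for $120.00 by mistake
ledger.correct("INV-1", 10_000)  # it should have been $100.00
```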

A Universal Problem

The principle of GIGO is not confined to data and analytics alone. Its lessons permeate various spheres, from improving data input accuracy on websites, to enhancing the reliability and safety of transportation systems, and even to preventing disastrous trajectories of future space missions. Regardless of the context, the essential task remains the same: to take out the “garbage.” As we navigate the increasingly data-driven landscape, understanding and applying the principles of GIGO will be crucial in ensuring reliable, predictable, and successful outcomes.

The principle of GIGO, while simple to comprehend, is challenging to manage in practice. As the complexity and volume of data grow, preventing “garbage” from entering the system becomes increasingly difficult. A key aspect to consider here is data quality management.

Emphasizing Data Quality Management

Organizations often grapple with a plethora of data in varying formats and from diverse sources. In such scenarios, it’s paramount to implement stringent measures that prevent the entry of poor-quality or incorrect data into the system. Robust data validation protocols, which verify the appropriateness, accuracy, and relevance of the data, play a significant role in such situations. This can range from ensuring data is in the correct format and fits within acceptable ranges, to verifying that the data is consistent and does not contain contradictions or inaccuracies.
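The three kinds of check named above (format, range, and consistency) can be sketched as a single validation function. The order schema, the email pattern, and the 1 to 1000 quantity range are assumptions made for illustration only.

```python
import re
from datetime import date

# Deliberately simple, assumed email shape: something@something.something
EMAIL = re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+")


def validate_order(order: dict) -> list[str]:
    """Return the problems found in one order record (hypothetical schema)."""
    problems = []
    # Format: the field must have the expected shape.
    if not EMAIL.fullmatch(order.get("email", "")):
        problems.append("email: bad format")
    # Range: the value must fall within plausible, assumed bounds.
    qty = order.get("quantity")
    if type(qty) is not int or not 1 <= qty <= 1000:
        problems.append("quantity: out of range")
    # Consistency: fields must not contradict each other.
    ordered, shipped = order.get("ordered_on"), order.get("shipped_on")
    if isinstance(ordered, date) and isinstance(shipped, date) and shipped < ordered:
        problems.append("shipped_on: earlier than ordered_on")
    return problems
```

Returning every problem, rather than stopping at the first, lets a user or an upstream system fix a bad record in one pass.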

Moreover, data quality isn’t static, so continuous monitoring and regular audits are critical to maintaining the standards of data entering the system. This involves running scheduled processes to cleanse the data, including checks for duplicates, verification of addresses, and enforcement of proper data formats. Such practices help preserve the integrity of data over time and keep the output relevant and reliable.
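A scheduled audit needs something measurable to report. The sketch below computes two simple data-quality metrics for a batch (the share of rows missing required fields, and the share of rows with a duplicate key) and flags the batch when either exceeds a threshold. The function name and the 5% and 1% thresholds are assumptions; a scheduler such as cron would run it, which is not shown.

```python
def audit(records: list[dict], required: list[str], key: str,
          max_missing_rate: float = 0.05,
          max_duplicate_rate: float = 0.01) -> dict:
    """Summarize completeness and uniqueness for one batch of records."""
    n = len(records)
    if n == 0:
        return {"rows": 0, "missing_rate": 0.0, "duplicate_rate": 0.0, "passed": True}
    # A row is incomplete if any required field is absent or empty.
    missing = sum(1 for r in records if any(r.get(f) in (None, "") for f in required))
    # Every repeat of an already-seen key counts as one duplicate.
    duplicates = n - len({r.get(key) for r in records})
    report = {
        "rows": n,
        "missing_rate": missing / n,
        "duplicate_rate": duplicates / n,
    }
    report["passed"] = (report["missing_rate"] <= max_missing_rate
                        and report["duplicate_rate"] <= max_duplicate_rate)
    return report
```

Tracking these rates from run to run is what makes quality drift visible before it shows up in the output.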

Harnessing Technology for Data Validation

In the age of digital transformation, the role of technology cannot be overstated. Advanced AI and machine learning algorithms can be harnessed to detect and correct bad input at the system level before the data is processed. These tools are capable of learning from historical data, identifying patterns, and predicting potential errors.

For instance, they can flag outliers that may signify erroneous data, or learn patterns in data entry to predict and correct likely errors. By doing so, they not only correct bad input but also reduce the chance of bad output, making the system’s outcomes more accurate and dependable.
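Outlier detection does not have to start with machine learning. As a simpler statistical stand-in (not an ML model), the sketch below flags values outside Tukey’s fences, i.e. more than 1.5 interquartile ranges below the first quartile or above the third. It uses Python’s standard `statistics.quantiles`; the function name and the sample amounts are illustrative.

```python
import statistics


def iqr_outliers(values: list[float], k: float = 1.5) -> list[float]:
    """Return values outside Tukey's fences [Q1 - k*IQR, Q3 + k*IQR]."""
    if len(values) < 4:
        return []  # too few points for meaningful quartiles
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < low or v > high]


# A likely data-entry slip (an extra "00") stands out against typical amounts.
amounts = [52.0, 48.5, 50.0, 51.2, 49.9, 5000.0, 50.3]
print(iqr_outliers(amounts))
```

A flagged value is only a candidate error: a genuinely large transaction would be flagged too, so the result is best routed to review rather than corrected automatically.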

Facilitating User Feedback Loops

Preventing bad output involves more than technological solutions; it also requires active participation from users. A well-designed system should support user feedback loops that let users preview and verify the output before it is finalized, giving them the opportunity to spot potential errors and make corrections. For example, a user might notice that a transaction amount doesn’t match their expectation, prompting them to check the input data for errors.

Implementing Efficient Error Correction Mechanisms

Despite all the preventive measures, there will be instances when incorrect input bypasses the safeguards, leading to bad output. Therefore, it’s essential to have efficient error correction mechanisms in place. In a data-driven environment, this could mean providing users with simple tools to rectify their input errors, or maintaining a robust customer support system that can assist with troubleshooting. In a physical product scenario, it could mean offering prepaid return shipping labels with online orders to facilitate returns.

Applying the GIGO Principle Across Sectors

Beyond the realm of data and analytics, the principle of GIGO has widespread implications. It is a cornerstone concept for error reduction in various fields, from user-interface design to the safety protocols of transportation systems, and even the trajectory calculations for space missions.

In Summary

The principle of Garbage In, Garbage Out serves as a reminder that the foundation of any reliable system lies in the quality of its input.

As we delve deeper into the world of data and analytics, this principle will continue to play a pivotal role in driving the accuracy, reliability, and success of our data-driven endeavours. Hence, whether you are a data scientist, a system designer, or a decision-maker, understanding and applying the principle of GIGO is indispensable.

Read the official NASA report here – https://sma.nasa.gov/docs/default-source/safety-messages/safetymessage-2009-08-01-themarsclimateorbitermishap.pdf

This article is based on the Universal Principles of Design by William Lidwell https://www.linkedin.com/learning/universal-principles-of-design/garbage-in-garbage-out
